As such, you need to design your AR experience to keep the user’s hands within the headset’s area of recognition and work with each headset’s specific set of gestures. Educating the user about the area of recognition for gestures — and notifying users when their gestures are near the boundaries — can help create a more successful user experience.
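As a rough illustration of the boundary notification idea, the sketch below classifies a hand position relative to the recognition area. It assumes the tracking system reports the hand as normalized coordinates between 0.0 and 1.0; `TrackingArea`, `boundary_status`, and the 10 percent margin are hypothetical names and values, not part of any headset SDK.

```python
from dataclasses import dataclass


@dataclass
class TrackingArea:
    """Hypothetical normalized recognition area: x and y run from 0.0 to 1.0."""
    margin: float = 0.1  # assumed width of the warning band along each edge


def boundary_status(x: float, y: float, area: TrackingArea = TrackingArea()) -> str:
    """Classify a normalized hand position as 'inside', 'near_edge', or 'outside'."""
    if not (0.0 <= x <= 1.0 and 0.0 <= y <= 1.0):
        return "outside"
    # Distance to the closest edge of the recognition area.
    edge_distance = min(x, 1.0 - x, y, 1.0 - y)
    return "near_edge" if edge_distance < area.margin else "inside"
```

An application could show a warning when the status is `near_edge` and pause the gesture when it is `outside`.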

Because this way of interacting is new to nearly everyone, keeping interactions as simple as possible is important. Most of your users will already be on a learning curve for interacting in AR, figuring out the gestures for their specific device (a universal AR gesture set has yet to be developed). Most AR headsets that use hand tracking come with a standard set of core gestures. Try to stick to these prepackaged gestures and avoid overwhelming your users by introducing new gestures specific to your application.

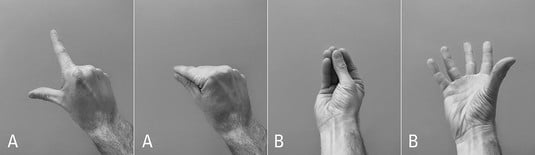

The image shows examples of the two core gestures for HoloLens, the “Air Tap” (A) and “Bloom” (B). An Air Tap is similar to a mouse click on a standard 2D screen. A user holds his finger in the ready position and presses his finger down to select or click the item targeted via user gaze. The “Bloom” gesture is a universal gesture to send a user to the Start menu. A user holds his fingertips together and then opens his hand.

Microsoft’s example of a user performing an “Air Tap” (A) and a “Bloom” (B).

Grabbing an object in the real world gives a user feedback such as the feel of the object, the weight of the object in his hand, and so on. Hand gestures made to select virtual holograms will provide the user with none of this standard tactile feedback. So it’s important to notify the user about the state of digital holograms in the environment in different ways.

Provide the user cues as to the state of an object or the environment, especially as the user tries to place or interact with digital holograms. For example, if your user is supposed to place a digital hologram in 3D space, providing a visual indicator can help communicate to her where the object will be placed. If the user can interact with an object in your scene, you may want to visually indicate that on the object, potentially using proximity to alert the user that she’s approaching an object she can interact with. If your user is trying to select one object among many, highlight the item she currently has selected and provide audio cues for her actions.

This image shows how the Meta 2 chooses to display this feedback to the user. A circle with a ring appears on the back of a user’s hand as he approaches an interactive object (A). As the user’s hand closes to a fist, the ring becomes smaller (B) and draws closer to the center circle. A ring touching the circle indicates a successful grab (C). A user’s hand moving near to the edge of the sensor is also detected and flagged via a red indicator and warning message (D).

A screen capture of in-headset view of a Meta 2 drag interaction.
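The ring-and-circle feedback described above can be approximated with a simple interpolation. This is a minimal sketch, not the Meta 2 implementation: it assumes the hand tracker reports a `closure` value from 0.0 (open hand) to 1.0 (fist), and the function names and radii are hypothetical.

```python
def ring_radius(closure: float, circle_radius: float = 1.0,
                open_ring_radius: float = 3.0) -> float:
    """Shrink the ring linearly from open_ring_radius to circle_radius as the hand closes."""
    closure = max(0.0, min(1.0, closure))  # clamp noisy tracker values
    return open_ring_radius - closure * (open_ring_radius - circle_radius)


def is_grabbing(closure: float, circle_radius: float = 1.0,
                open_ring_radius: float = 3.0) -> bool:
    """Report a grab once the ring has shrunk to touch the center circle."""
    return ring_radius(closure, circle_radius, open_ring_radius) <= circle_radius
```

Driving the visual directly from the closure value keeps the feedback continuous, so the user sees progress toward a grab rather than only the final state.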

Mobile device interaction in AR apps

Many of the design principles for AR apply to both headsets and mobile experiences. However, there is a considerable difference between the interactive functionality of AR headsets and mobile AR experiences. Because of the form factor differences between AR headsets and AR mobile devices, interaction requires some different rules.

Keeping interactions simple and providing feedback when placing or interacting with an object are rules that apply to both headset and mobile AR experiences. But most interaction for users on mobile devices will take place through gestures on the touchscreen of the device instead of users directly manipulating 3D objects or using hand gestures in 3D space.

A number of libraries, such as ManoMotion, can provide 3D gesture hand tracking and gesture recognition for controlling holograms in mobile AR experiences. These libraries may be worth exploring depending on the requirements of your app. Just remember that your user will likely be holding the device in one hand while experiencing your app, potentially making it awkward to try to also insert her other hand in front of a back-facing camera.

Your users likely already understand mobile device gestures such as single-finger taps, drags, two-finger pinching and rotating, and so on. However, most users understand these interactions in relation to the two-dimensional world of the screen instead of the three dimensions of the real world.

After a hologram is placed in space, consider allowing movement of that hologram in only two dimensions, essentially allowing it to only slide across the surface upon which it was placed. Similarly, consider limiting the object rotation to a single axis. Allowing movement or rotation on all three axes can quickly become very confusing to the end user and result in unintended consequences or placement of the holograms.

If you’re rotating an object, consider allowing rotation only around the y-axis. Locking these movements prevents your user from inadvertently shifting objects in unpredictable ways. You may also want to create a method to “undo” any unintentional movement of your holograms, as placing these holograms in real-world space can be challenging for your users to get right.
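One way to picture these constraints is a small model in which a placed hologram can only slide across its surface and turn around the y-axis, with every change recorded so it can be undone. The class and method names here are hypothetical and independent of any AR framework; treat it as a sketch of the idea rather than a recommended implementation.

```python
from dataclasses import dataclass, field


@dataclass
class PlacedHologram:
    """Hypothetical hologram locked to the surface it was placed on."""
    x: float
    y: float  # height of the surface; never changed after placement
    z: float
    yaw_degrees: float = 0.0  # rotation around the y-axis only
    _history: list = field(default_factory=list, repr=False)

    def _snapshot(self) -> None:
        # Remember the current state so the next change can be undone.
        self._history.append((self.x, self.z, self.yaw_degrees))

    def slide(self, dx: float, dz: float) -> None:
        """Move across the surface; there is deliberately no dy parameter."""
        self._snapshot()
        self.x += dx
        self.z += dz

    def rotate(self, delta_degrees: float) -> None:
        """Rotate around the y-axis, keeping the angle within 0 to 360 degrees."""
        self._snapshot()
        self.yaw_degrees = (self.yaw_degrees + delta_degrees) % 360.0

    def undo(self) -> bool:
        """Restore the state before the last change; return False if nothing to undo."""
        if not self._history:
            return False
        self.x, self.z, self.yaw_degrees = self._history.pop()
        return True
```

Because the API exposes no way to change `y` or to rotate around other axes, a stray touch gesture cannot push the hologram into an unexpected position.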

Most mobile devices support a “pinch” interaction with the screen to either zoom in on an area or scale an object. Because a user is at a fixed point in space in both the real world and the hologram world, you probably won’t want to utilize this gesture for zooming in AR.

Similarly, consider eliminating a user’s ability to scale an object in AR. A two-fingered pinch gesture for scale is a standard interaction for mobile users. In AR, this scale gesture often doesn’t make sense. AR hologram 3D models are often a set size. The visual appearance of the size of the 3D model is influenced by the distance from the AR device. A user scaling an object in place to make the object look closer to the camera is really just making the object larger in place, often not what the user intended. Pinch-to-scale may still be used in AR, but its usage should be thoughtfully considered.
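The link between distance and apparent size can be made concrete with basic geometry: an object of a given size subtends a visual angle of 2·atan(size / (2·distance)). The helper below is an illustrative sketch with a hypothetical function name; it shows that scaling an object up can mimic the appearance of moving closer while leaving the object at its original position.

```python
import math


def apparent_angle_degrees(size_m: float, distance_m: float) -> float:
    """Visual angle, in degrees, subtended by an object of size_m seen from distance_m."""
    return math.degrees(2.0 * math.atan(size_m / (2.0 * distance_m)))


# A 1 m model viewed from 2 m looks the same size as a 2 m model viewed from 4 m,
# even though the second model occupies far more real-world space.
near_small = apparent_angle_degrees(1.0, 2.0)
far_large = apparent_angle_degrees(2.0, 4.0)
```

This is why a pinch that doubles a model's scale may look like "bringing it closer" on screen while actually leaving an oversized object in the scene.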

Voice interaction in AR apps

Some AR devices also support voice interaction capabilities. Although the interaction for most AR headsets is primarily gaze and gestures, for those headsets with voice capabilities you need to consider how to utilize all methods of interaction and how to make them work well together. Voice controls can be a very convenient way to control your application. As processing power grows exponentially, expect voice control to be introduced and refined further on AR headsets.

Here are some things to keep in mind as you develop voice commands for AR devices that support this feature:

- Use simple commands. Keeping your commands simple will help avoid potential issues of users speaking with different dialects or accents. It also minimizes the learning curve of your application. For example, “Read more” is likely a better choice than “Provide further information about selected item.”

- Ensure that voice commands can be undone. Voice interactions can sometimes be triggered inadvertently by capturing audio of others nearby. Make sure that any voice command can be undone if an accidental interaction is triggered.

- Eliminate similar-sounding interactions. To prevent your user from triggering incorrect actions, eliminate any spoken commands that sound similar but perform different actions, and keep each command consistent. For example, if “Read more” performs a particular action in your application (such as revealing more text), it should perform that same action throughout your application. Likewise, “Open reference” and “Open preferences” are far too likely to be mistaken for each other.

- Avoid system commands. Make sure your program doesn’t override voice commands already reserved by the system. If a command such as “home screen” is reserved by the AR device, don’t reprogram that command to perform different functionality within your application.

- Provide feedback. Voice interactions should provide the same level of feedback cues to a user that standard interaction methods do. If a user is utilizing voice commands, provide feedback that your application heard and understood the command. One method of doing so would be to provide onscreen text of what commands the system interpreted from the user. This will provide the user with feedback as to how the system is understanding his commands and allow him to adjust his commands if needed.
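
Some of the guidelines above can be checked automatically while you design a command set. The sketch below flags commands that collide with reserved system phrases or that are spelled very much alike. It rests on several assumptions: text similarity from Python's standard `difflib` is only a rough stand-in for how alike two phrases sound, the 0.8 threshold is arbitrary, and `RESERVED_COMMANDS` is a hypothetical list that you would replace with the phrases documented for your target device.

```python
from difflib import SequenceMatcher
from itertools import combinations

# Hypothetical reserved phrases; consult your device's documentation for the real list.
RESERVED_COMMANDS = {"home screen"}


def similarity(a: str, b: str) -> float:
    """Return a 0.0 to 1.0 text similarity score (a proxy for sounding alike)."""
    return SequenceMatcher(None, a.lower(), b.lower()).ratio()


def audit_commands(commands, reserved=RESERVED_COMMANDS, threshold=0.8):
    """List problems found in a proposed set of voice commands."""
    problems = []
    for command in commands:
        if command.lower() in reserved:
            problems.append(f"'{command}' is reserved by the system")
    for a, b in combinations(commands, 2):
        if similarity(a, b) >= threshold:
            problems.append(f"'{a}' and '{b}' are too similar")
    return problems


issues = audit_commands(["Read more", "Open reference", "Open preferences", "Home screen"])
```

With this input, the audit should flag "Home screen" as reserved and "Open reference" and "Open preferences" as too similar. A check like this does not replace testing with real speakers, but it can catch obvious conflicts early.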