If the last network layer is a softmax layer, the network outputs the probability of the photo containing a dog or a cat (the two classes you trained it to recognize) and the output sums to 100 percent. When the last layer is a sigmoid-activated layer, you obtain scores that you can interpret as probabilities of content belonging to each class, independently. The scores won’t necessarily sum to 100 percent. In both cases, the classification may fail when the following occurs:

- The main object isn’t what you trained the network to recognize, such as presenting the example neural network with a photo of a raccoon. In this case, the network will output an incorrect answer of dog or cat.

- The main object is partially obstructed. For instance, your cat is playing hide and seek in the photo you show the network, and the network can’t spot it.

- The photo contains many different objects to detect, perhaps including animals other than cats and dogs. In this case, the output from the network will suggest a single class rather than include all the objects.

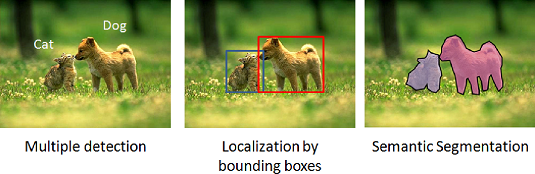

- Detection: Determining when an object is present in an image. Detection is different from classification because it involves just a portion of the image, implying that the network can detect multiple objects of the same and of different types. The capability to spot objects in partial images is called instance spotting.

- Localization: Defining exactly where a detected object appears in an image. You can have different types of localizations. Depending on granularity, they distinguish the part of the image that contains the detected object.

- Segmentation: Classification of objects at the pixel level. Segmentation takes localization to the extreme. This kind of neural model assigns each pixel of the image to a class or even an entity. For instance, the network marks all the pixels in a picture relative to dogs and distinguishes each one using a different label (called instance segmentation).

Detection, localization and segmentation example from the Coco dataset.

Detection, localization and segmentation example from the Coco dataset.

Performing localization with convolutional neural networks

Localization is perhaps the easiest extension that you can get from a regular CNN. It requires that you train a regressor model alongside your deep learning classification model. A regressor is a model that guesses numbers. Defining object location in an image is possible using corner pixel coordinates, which means that you can train a neural network to output key measures that make it easy to determine where the classified object appears in the picture using a bounding box. Usually a bounding box uses the x and y coordinates of the lower-left corner, together with the width and the height of the area that encloses the object.Classifying multiple objects with convolutional neural networks

A CNN can detect (predicting a class) and localize (by providing coordinates) only a single object in an image. If you have multiple objects in an image, you may still use a CNN and locate each object present in the picture by means of two old image-processing solutions:- Sliding window: Analyzes only a portion (called a region of interest) of the image at a time. When the region of interest is small enough, it likely contains only a single object. The small region of interest allows the CNN to correctly classify the object. This technique is called sliding window because the software uses an image window to limit visibility to a particular area (the way a window in a home does) and slowly moves this window around the image. The technique is effective but could detect the same image multiple times, or you may find that some objects go undetected based on the window size that you decide to use to analyze the images.

- Image pyramids: Solves the problem of using a window of fixed size because it generates increasingly smaller resolutions of the image. Therefore, you can apply a small sliding window. In this way, you transform the objects in the image, and one of the reductions may fit exactly into the sliding window used.

The sliding window and image pyramid have inspired deep learning researchers to discover a couple of conceptually similar approaches that are less computationally intensive. The first approach is one-stage detection. It works by dividing the images into grids, and the neural network makes a prediction for every grid cell, predicting the class of the object inside.

The prediction is quite rough, depending on the grid resolution (the higher the resolution, the more complex and slower the deep learning network). One-stage detection is very fast, having almost the same speed as a simple CNN for classification. The results have to be processed to gather the cells representing the same object together, and that may lead to further inaccuracies. Neural architectures based on this approach are Single-Shot Detector (SSD), You Only Look Once (YOLO), and RetinaNet. One-stage detectors are very fast, but not so precise.

The second approach is two-stage detection. This approach uses a second neural network to refine the predictions of the first one. The first stage is the proposal network, which outputs its predictions on a grid. The second stage fine-tunes these proposals and outputs a final detection and localization of the objects. R-CNN, Fast R-CNN, and Faster R-CNN are all two-stage detection models that are much slower than their one-stage equivalents, but more precise in their predictions.

Annotating multiple objects in images with convolutional neural networks

To train deep learning models to detect multiple objects, you need to provide more information than in simple classification. For each object, you provide both a classification and coordinates within the image using the annotation process, which contrasts with the labeling used in simple image classification.Labeling images in a dataset is a daunting task even in simple classification. Given a picture, the neural network must provide a correct classification for the training and test phases. In labeling, the network decides on the right label for each picture, and not everyone will perceive the depicted image in the same way. The people who created the ImageNet dataset used the classification provided by multiple users from the Amazon Mechanical Turk crowdsourcing platform (ImageNet used the Amazon service so much that in 2012, it turned out to be Amazon’s most important academic customer.)

In a similar way, you rely on the work of multiple people when annotating an image using bounding boxes. Annotation requires that you not only label each object in a picture but also must determine the best box with which to enclose each object. These two tasks make the annotation even more complex than labeling and more prone to producing erroneous results. Performing annotation correctly requires the work of more people who can provide a consensus on the accuracy of the annotation.

Some open source software can help in annotation for image detection (as well as for image segmentation). Two tools are particularly effective:

- LabelImg, created by TzuTa Lin with a YouTube tutorial.

- LabelMe is a powerful tool for image segmentation that provides an online service.

- FastAnnotationTool, based on the computer vision library OpenCV. The package is less well maintained but still viable.

Segmenting images with convolutional neural networks

Semantic segmentation predicts a class for each pixel in the image, which is a different perspective from either labeling or annotation. Some people also call this task dense prediction because it makes a prediction for every pixel in an image. The task doesn’t specifically distinguish different objects in the prediction.For instance, a semantic segmentation can show all the pixels that are of the class cat, but it won’t provide any information about what the cat (or cats) are doing in the picture. You can easily get all the objects in a segmented image by post-processing, because after performing the prediction, you can get the object pixel areas and distinguish between different instances of them, if multiple separated areas exist under the same class prediction.

Different deep learning architectures can achieve image segmentation. Fully Convolutional Networks (FCNs) and U-NETs are among the most effective. FCNs are built for the first part (called the encoder), which is the same as CNNs. After the initial series of convolutional layers, FCNs end with another series of CNNs that operate in a reverse fashion as the encoder (making them a decoder). The decoder is constructed to recreate the original input image size and output as pixels the classification of each pixel in the image. In such a fashion, the FCN achieves the semantic segmentation of the image. FCN are too computationally intensive for most real-time applications. In addition, they require large training sets to learn their tasks well; otherwise, their segmentation results are often coarse.

Finding the encoder part of the FCN pretrained on ImageNet, which accelerates training and improves learning performance, is common.

U-NETs are an evolution of FCN devised by Olaf Ronneberger, Philipp Fischer, and Thomas Brox in 2015 for medical purposes. U-NETs present advantages compared to FCNs. The encoding (also called contraction) and the decoding parts (also referred to as expansion) are perfectly symmetric. In addition, U-NETs use shortcut connections between the encoder and the decoder layers. These shortcuts allow the details of objects to pass easily from the encoding to the decoding parts of the U-NET, and the resulting segmentation is precise and fine-grained.Building a segmentation model from scratch can be a daunting task, but you don’t need to do that. You can use some pretrained U-NET architectures and immediately start using this kind of neural network by leveraging the segmentation model zoo (a term used to describe the collection of pretrained models offered by many frameworks) offered by segmentation models, a package offered by Pavel Yakubovskiy. You can find installation instructions, the source code, and plenty of usage examples at GitHub. The commands from the package seamlessly integrate with Keras.