You can run the Student t tests using typical statistical software and interpret the output produced. In this example, you'll be using the software package OpenStat.

The basic idea of a t test

All the Student t tests for comparing sets of numbers are trying to answer the same question, "Is the observed difference larger than what you would expect from random fluctuations alone?" The t tests all answer this question in the same general way, which you can think of in terms of the following steps:

Calculate the difference (D) between the groups or the time points.

Calculate the precision of the difference (the magnitude of the random fluctuations in that difference), in the form of a standard error (SE) of that difference.

Calculate a test statistic (t), which expresses the size of the difference relative to the size of its standard error.

That is: t = D/SE.

Calculate the degrees of freedom (df) of the t statistic.

Degrees of freedom is a tricky concept; as a practical matter, when dealing with t tests, it's the total number of observations minus the number of means you calculated from those observations.

Calculate the p value (how likely it is that random fluctuations alone could produce a t value at least as large as the value you just calculated) using the Student t distribution.

The Student t statistic is always calculated as D/SE; each kind of t test (one-group, paired, unpaired, Welch) calculates D, SE, and df in a way that makes sense for that kind of comparison, as summarized here.

| One-Group | Paired | Unpaired t Equal Variance | Welch t Unequal Variance | |

|---|---|---|---|---|

| D | Difference between mean of observations and a hypothesized value (h) | Mean of paired differences | Difference between means of the two groups | Difference between means of the two groups |

| SE | SE of the observations | SE of paired differences | SE of difference, based on a pooled estimate of SD within each group | SE of difference, from SE of each mean, by propagation of errors |

| df | Number of observations — 1 | Number of pairs — 1 | Total number of observations — 2 | "Effective" df, based on the size and SD of the two groups |

Running a t test

Almost all modern statistical software packages can perform all four kinds of t tests. Preparing your data for a t test is quite easy:

For the one-group t test, you need only one column of data, containing the variable whose mean you want to compare to the hypothesized value (H). The program usually asks you to specify a value for H and assumes 0 if you don't specify it.

For the paired t test, you need two columns of data representing the pair of numbers (before and after, or the two matched subjects). For example, if you're comparing the before and after values for 20 subjects, or values for 20 sets of twins, the program will want to see a data file with 20 rows and two columns.

For the unpaired test (Student t or Welch), most programs want you to have all the measured values in one variable, in one column, with a separate row for every observation (regardless of which group it came from).

So if you were comparing test scores between a group of 30 subjects and a group of 40 subjects, you'd have a file with 70 rows and 2 columns. One column would have the test scores, and the other would have a numerical or text value indicating which group each subject belonged to.

Interpreting the output from a t test

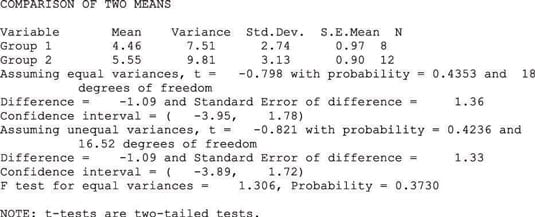

The figure shows the output of an unpaired t test from the OpenStat program. Other programs usually provide the same kind of output, although it may be arranged and formatted differently.

The first few lines provide the usual summary statistics (the mean, variance, standard deviation, standard error of the mean, and count of the number of observations) for each group. The program gives the output for both kinds of unpaired t tests (you don't even have to ask):

The classic Student t test (which assumes equal variances)

The Welch test (which works for unequal variances)

For each test, the output shows the value of the t statistic, the p value (which it calls probability), and the degrees of freedom (df), which, for the Welch test, might not be a whole number.

The program also shows the difference between the means of the two groups, the standard error of that difference, and the 95 percent confidence interval around the difference of the means. The program leaves it up to you to use the results from the appropriate test (Student t or Welch t) and ignore the other test's results.

But how do you know which set is appropriate? The program very helpfully performs what's called an F test for equal variances between the two groups. Look at the p value from this F test:

If p > 0.05, use the "Assuming equal variances" results.

If p

In this example, the F test gives a p value of 0.373, which (being greater than 0.05) says that the two variances are not significantly different. So you can use the classic equal variances t test, which gives a p value of 0.4353.

This p value (being greater than 0.05) says that the means of the two groups are not significantly different. In this case, the unequal variances (Welch) t test also gives a nonsignificant p value of 0.4236 (the two t tests often produce similar p values when the variances are nearly equal).